I helped Staffbase validate, pivot, and scale a high-risk analytics product

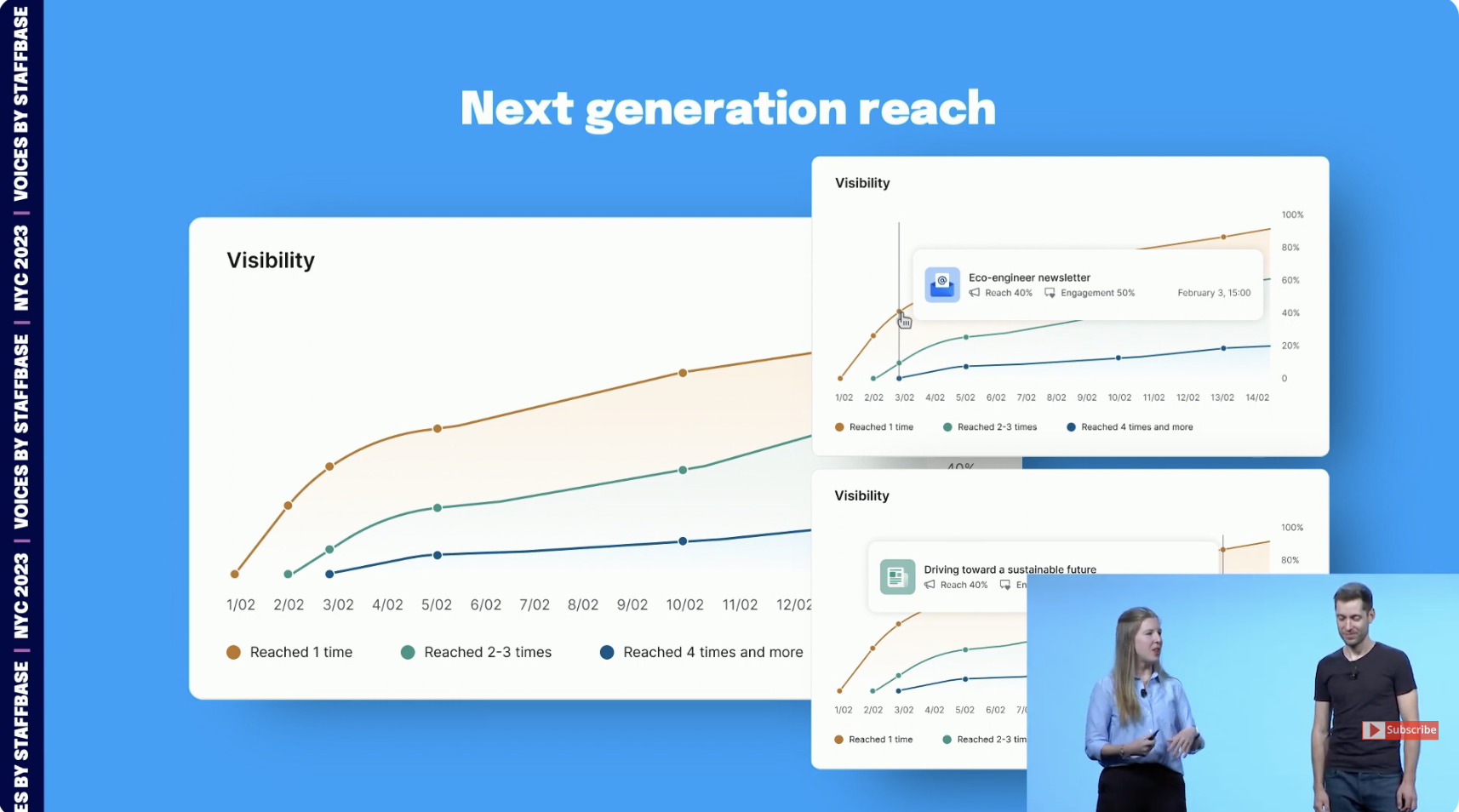

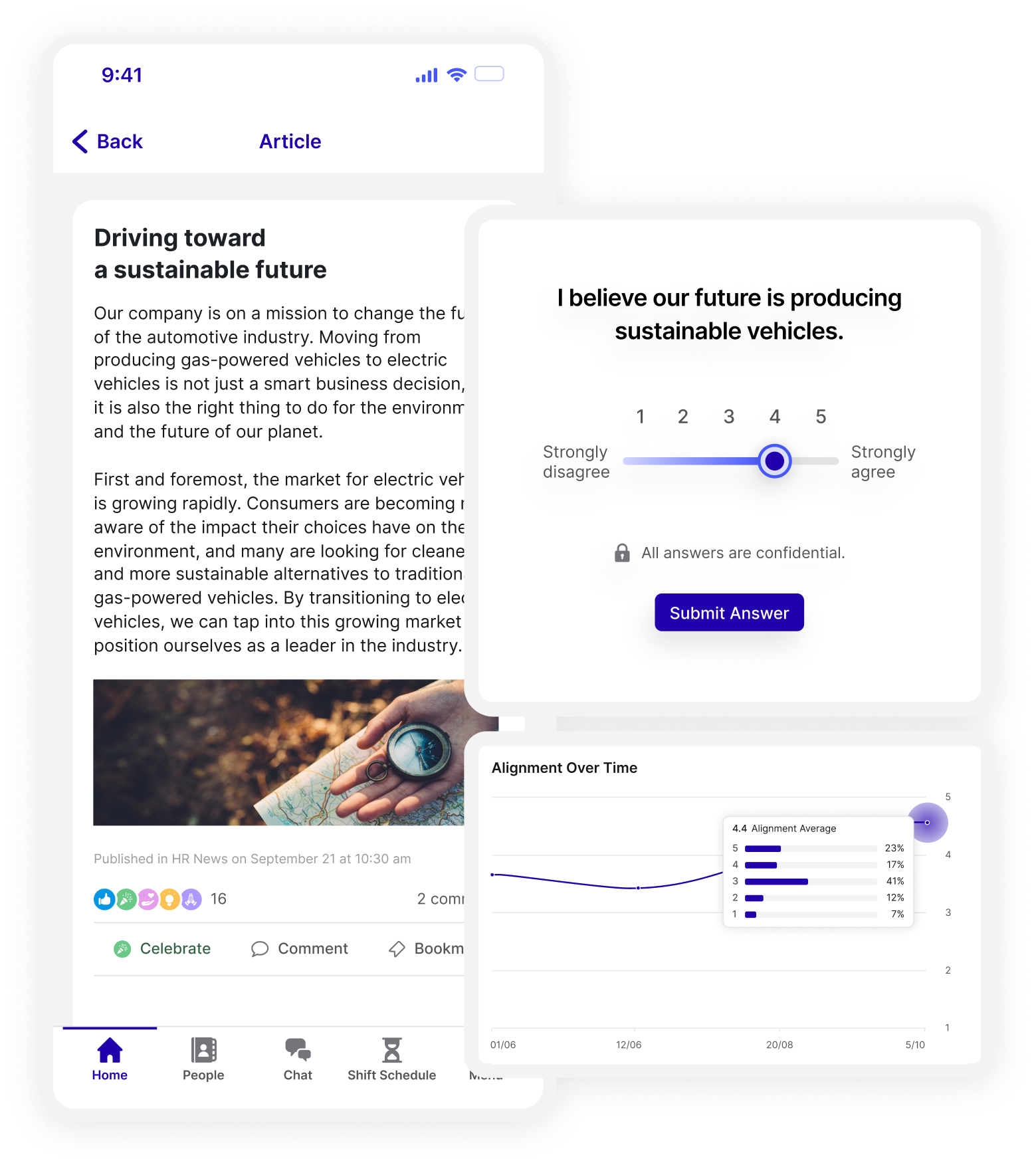

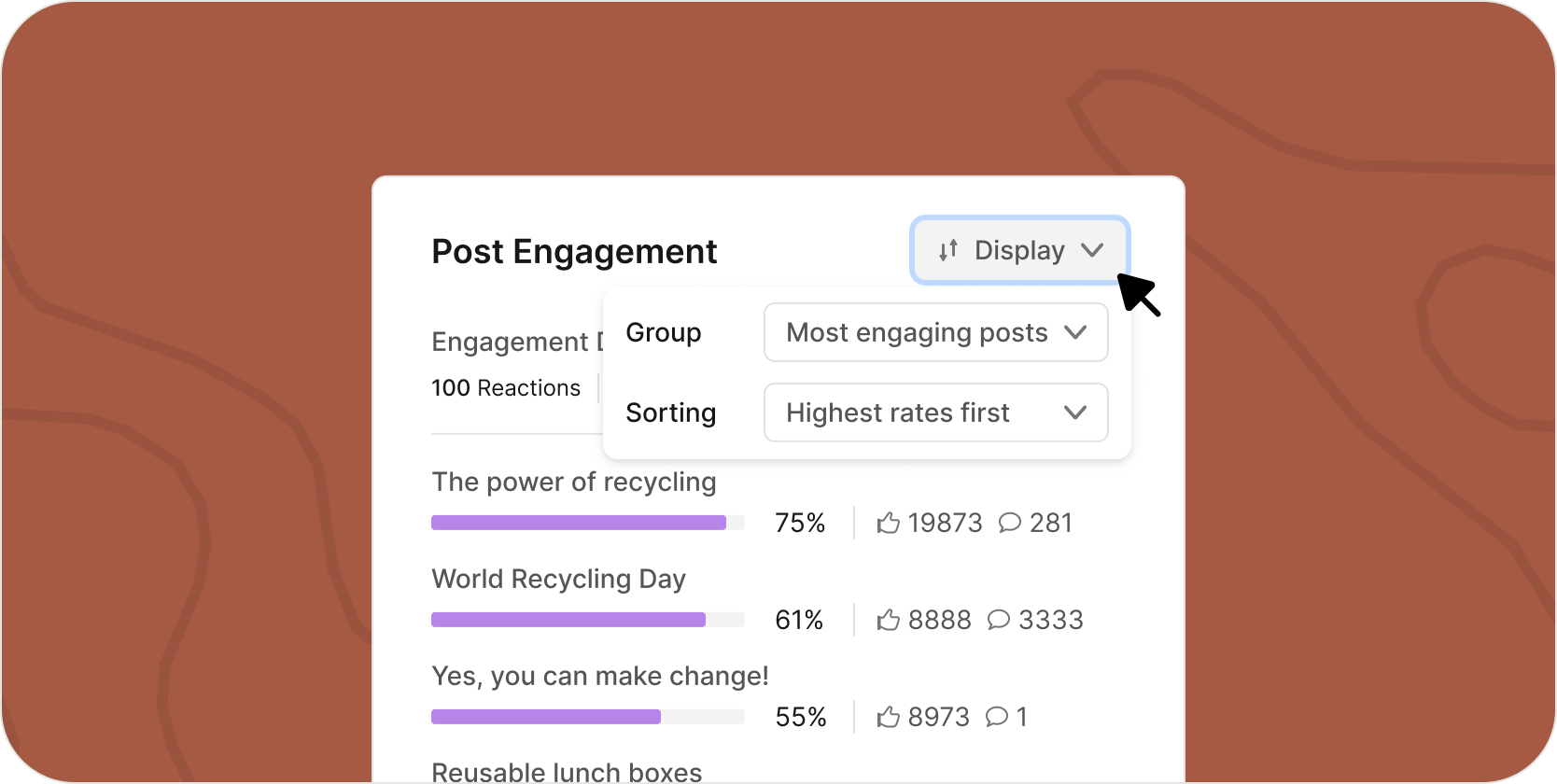

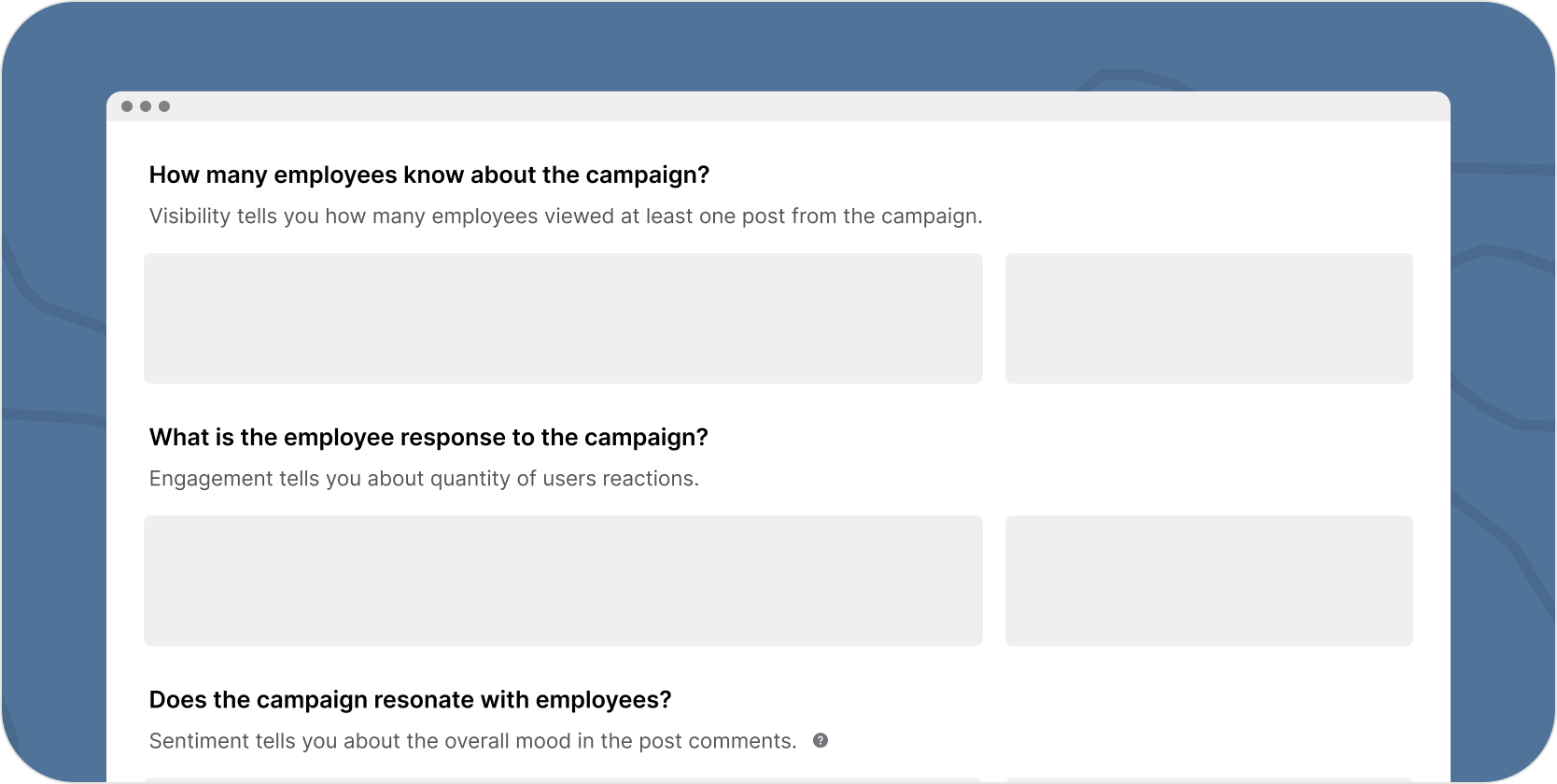

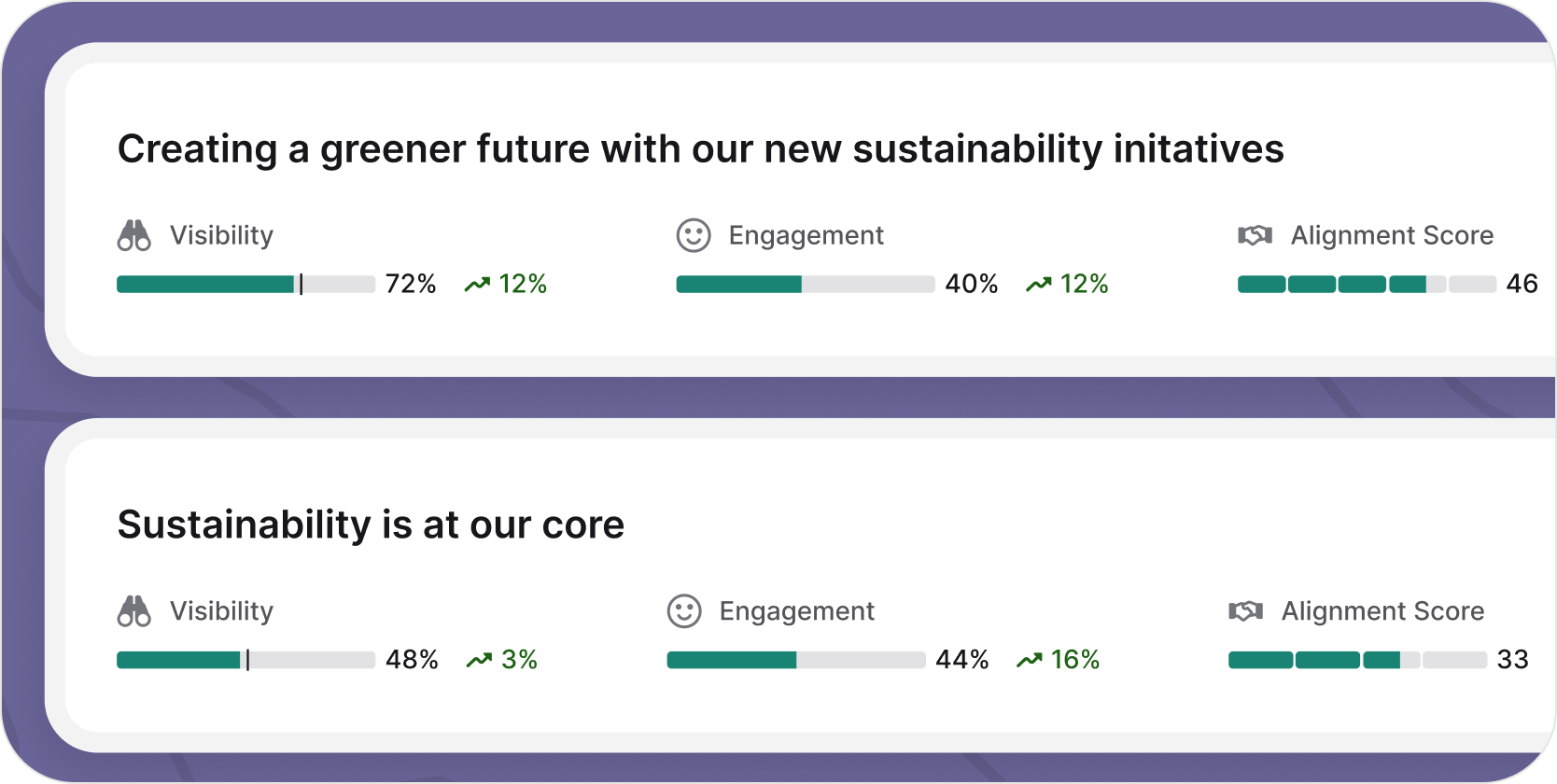

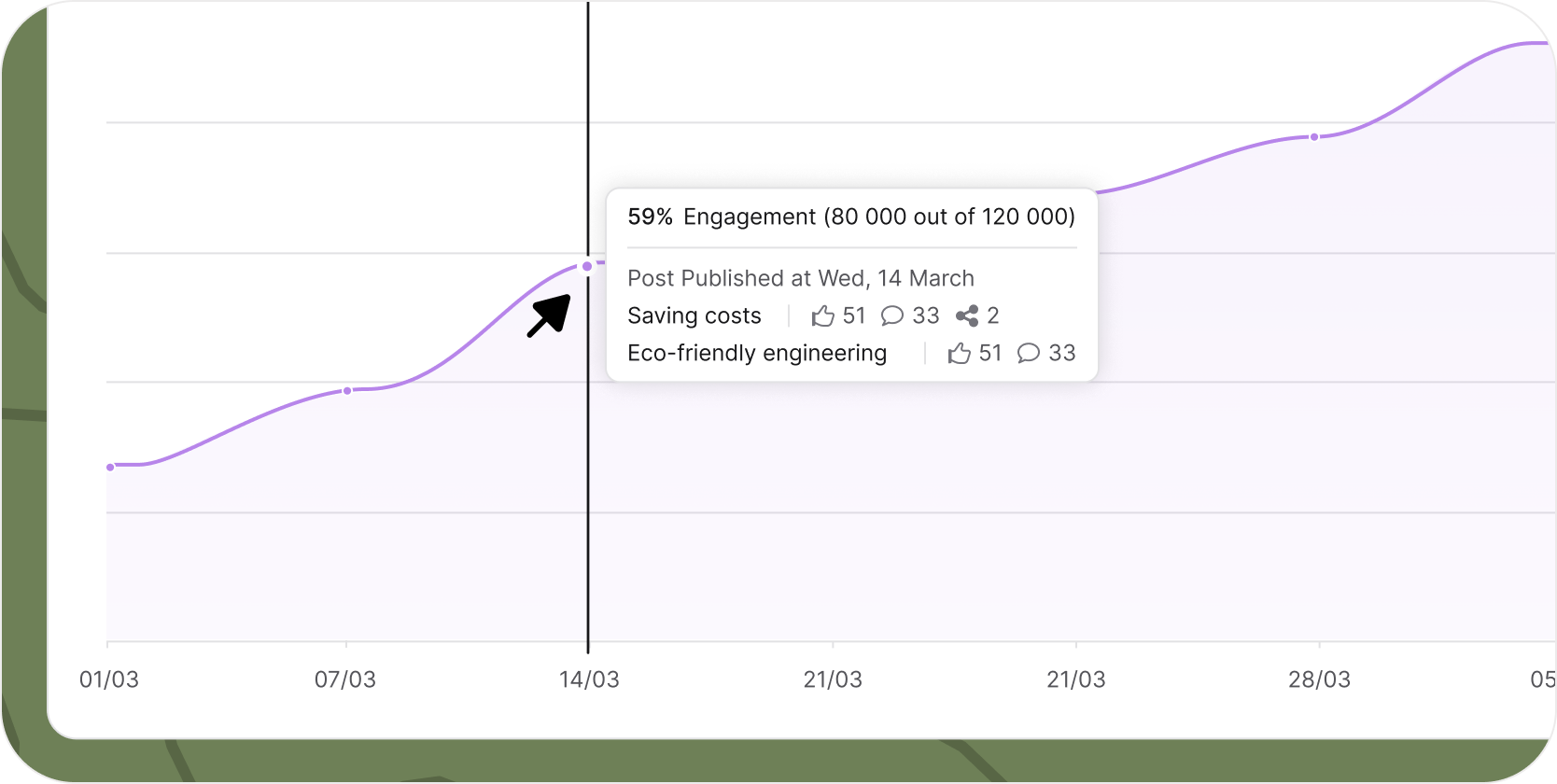

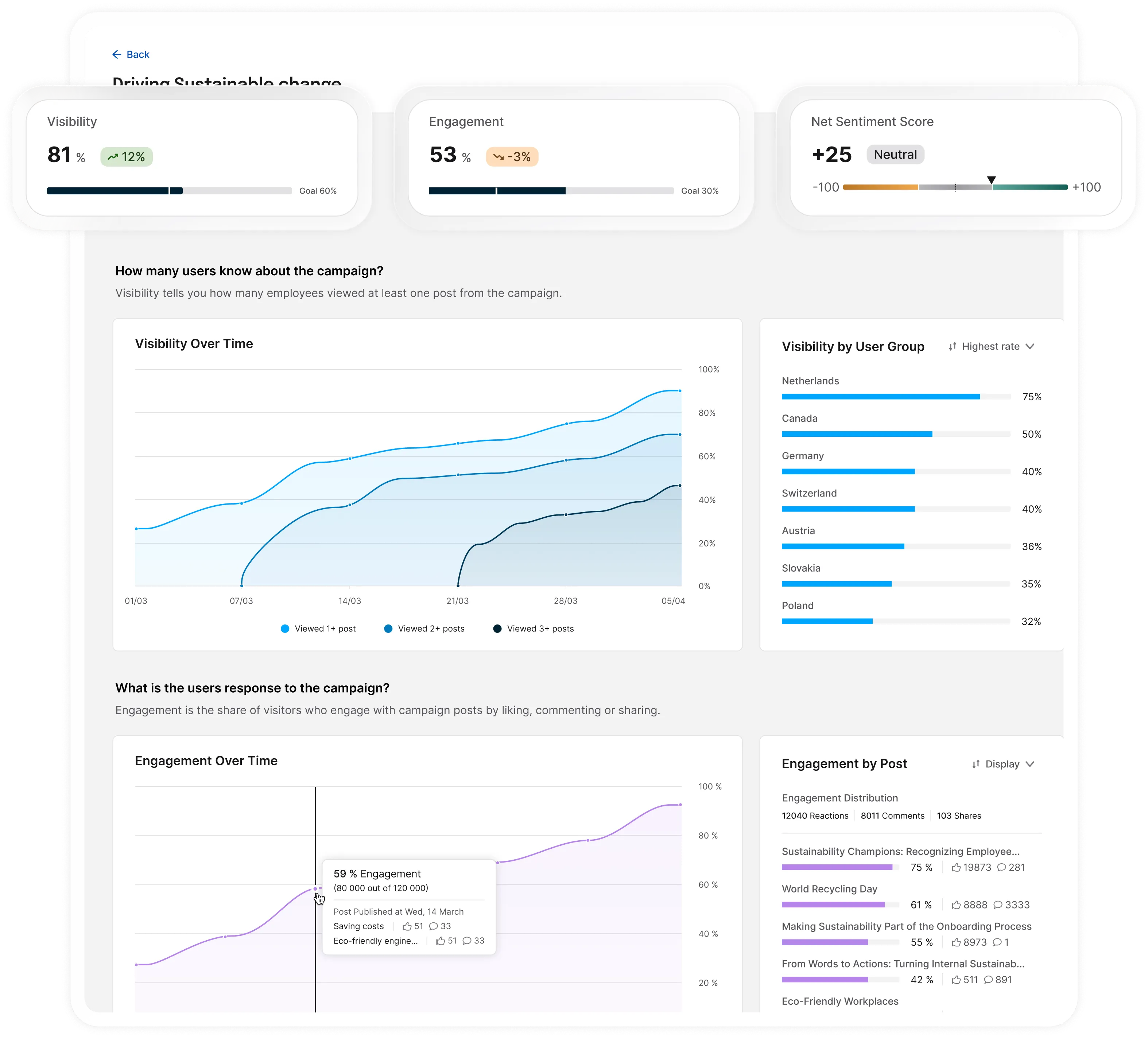

Communication leaders struggle to prove their business impact. Existing analytics tools focus on raw metrics, require data literacy, and fail to translate performance into insights executives understand.

Ahead of Staffbase's flagship conference, we launched an unvalidated analytics concept under extreme time pressure to position the company as a market leader.

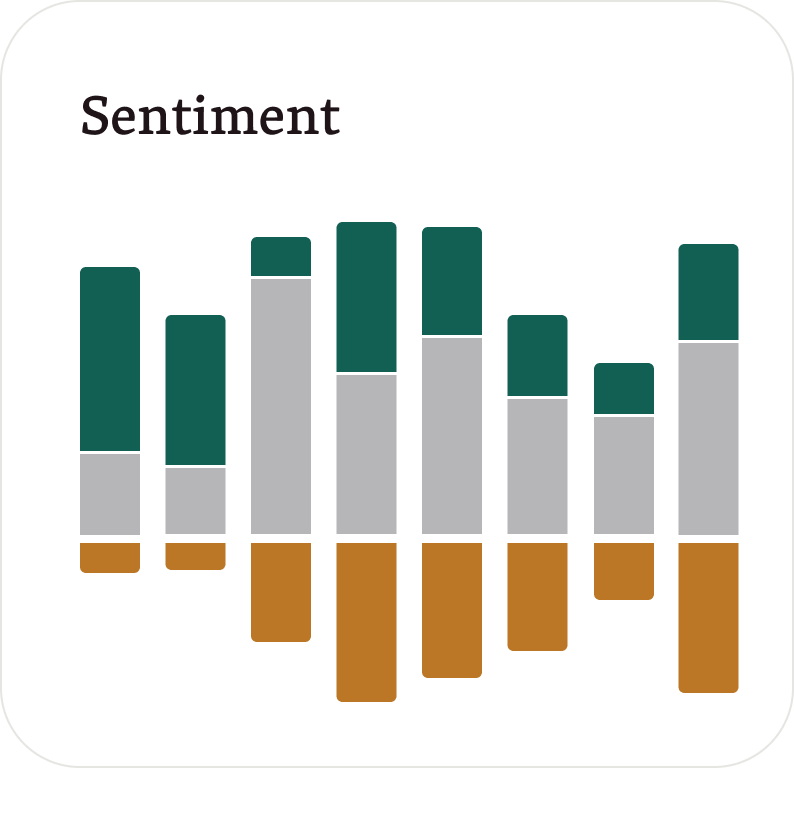

After a weak initial market response, we reframed the problem through research and built a campaign-level analytics dashboard that turns communication data into clear narratives. The dashboard became a core selling point for over a year and directly supported enterprise deal closures.